Most content strategy frameworks assume one audience. AHQG (the AI-Human Query Gap) assumes two, and treats them as structurally different.

In Part 1, I argued that AI retrieval behavior and human search behavior operate on different logic. AI doesn’t search by intent. It retrieves by citability. The asymmetry between these two modes is real and measurable.

Now the question is: what do you do with that asymmetry? You map it.

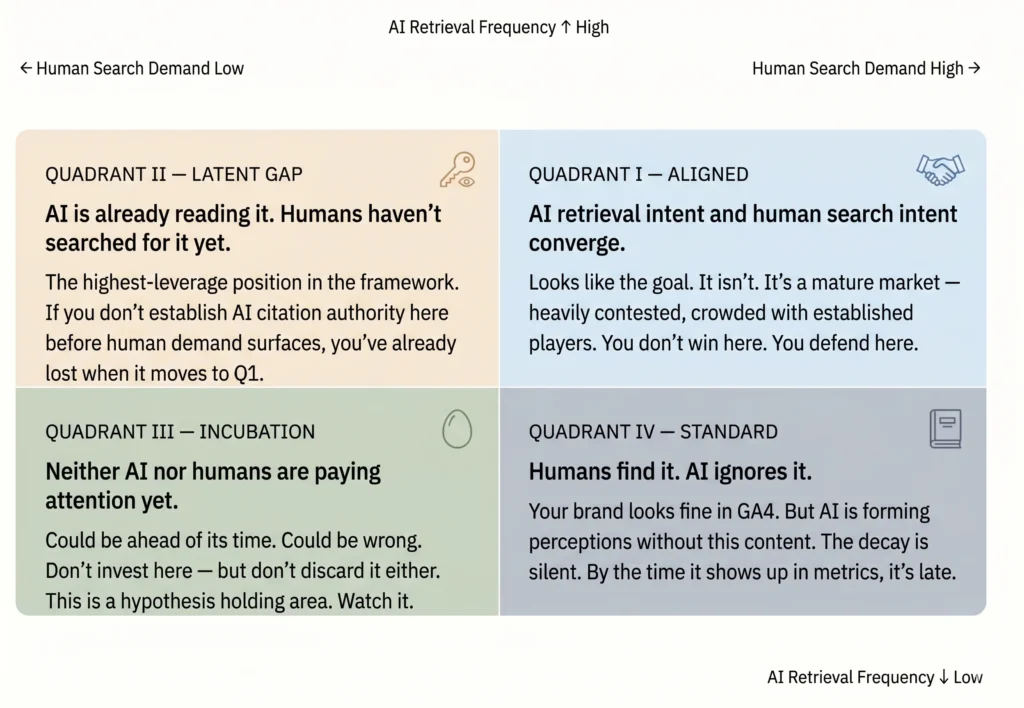

Two axes. Vertical: AI retrieval frequency — measurable via EdgeShaping, which tracks AI crawler access at the CDN layer. Horizontal: human search demand — conventional search volume and intent strength. Cross them, and four quadrants emerge.

The AHQG Matrix.
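To make the two-axis mapping concrete, here is a minimal sketch of how a topic could be placed in a quadrant. The quadrant names are the ones this article uses; everything else is an assumption for illustration: the `Topic` fields, the units, the threshold values, and the sample numbers are all made up, and the placement of Standard (high human demand, low AI retrieval) and Incubation (low on both) is inferred from how those quadrants are described later in the text.

```python
from dataclasses import dataclass

# Hypothetical cut-offs: the article does not say where "high" begins on either axis.
AI_RETRIEVAL_THRESHOLD = 50     # AI crawler hits per month (assumed unit)
HUMAN_DEMAND_THRESHOLD = 1000   # monthly search volume (assumed unit)


@dataclass
class Topic:
    name: str
    ai_retrievals: int   # vertical axis: AI retrieval frequency
    search_volume: int   # horizontal axis: human search demand


def quadrant(topic: Topic) -> str:
    """Place a topic in one of the four AHQG quadrants."""
    high_ai = topic.ai_retrievals >= AI_RETRIEVAL_THRESHOLD
    high_human = topic.search_volume >= HUMAN_DEMAND_THRESHOLD
    if high_ai and high_human:
        return "Aligned"       # Q1: both audiences are already here
    if high_ai:
        return "Latent Gap"    # Q2: AI retrieves, humans do not search yet
    if high_human:
        return "Standard"      # humans search, AI does not retrieve
    return "Incubation"        # neither audience yet


# Illustrative, made-up numbers.
topics = [
    Topic("established category guide", 120, 8000),
    Topic("half-formed problem explainer", 90, 40),
    Topic("high-traffic product page", 3, 5000),
    Topic("new experimental topic", 1, 10),
]

for t in topics:
    print(f"{t.name}: {quadrant(t)}")
```

In practice the thresholds would need to be calibrated per site, for example against the median of each axis, rather than fixed as constants.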

The top-right is not the goal.

Every 2×2 matrix trains people to aim for the top-right. AHQG is different. Aligned — Q1 — is where everyone already is. The brands with domain authority, the established publishers, the content teams that have been optimizing for years. Entering that quadrant now isn’t strategy. It’s latency.

The strategic move is Latent Gap (Q2): high AI retrieval frequency, low human search demand. AI is already retrieving content in this space, content that answers questions humans haven’t fully articulated yet. Vague intentions. Half-formed problems. The kind of thing that doesn’t generate search volume because people don’t yet have the vocabulary to search for it.

But AI has that vocabulary. It’s been built from billions of human utterances, including the incomplete ones. It retrieves on behalf of questions that humans are still learning to ask.

And here’s the compounding logic: Latent Gap topics don’t stay latent. They surface. When human demand finally catches up and a topic migrates from Q2 to Q1, the citations are already set. The content that established AI authority early becomes the reference point. Late entries don’t displace it — they cite it.

Standard: the silent decay.

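The quadrant that concerns me most isn’t Incubation, where neither audience is showing up yet. It’s Standard, where human search demand is high and AI retrieval is low.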

Standard content performs. It ranks. It converts. GA4 shows healthy numbers. Most organizations look at that dashboard and see no problem. That’s exactly the problem.

Two mechanisms drive content into Standard. The first is intent misalignment: the content was optimized for human search patterns, not AI retrieval logic. The second is structural: most AI crawlers do not execute JavaScript. Content that depends on client-side JS rendering is, for practical purposes, invisible to AI. In that case, AI tends to interpret the page from what the raw HTML does carry: its URL path and metadata. The carefully crafted body content may simply not be reaching the model.
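One rough way to test for the structural problem is to ask whether a key phrase from the body exists in the raw HTML at all, before any JavaScript runs. The sketch below does that with Python's standard-library `html.parser`. The helper names (`StaticTextExtractor`, `static_text`, `visible_without_js`) and the two sample pages are hypothetical, and this only approximates what a non-rendering crawler sees; it does not model any specific AI crawler.

```python
from html.parser import HTMLParser


class StaticTextExtractor(HTMLParser):
    """Collect text present in raw HTML, skipping <script> and <style> contents."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that is not inside a script or style block.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def static_text(html: str) -> str:
    """Return the text a client that does not run JavaScript could read."""
    parser = StaticTextExtractor()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


def visible_without_js(html: str, key_phrase: str) -> bool:
    """True if key_phrase appears in the page text without executing JS."""
    return key_phrase.lower() in static_text(html).lower()


# Two hypothetical pages carrying the same sentence.
server_rendered = (
    "<html><head><title>Guide</title></head><body>"
    "<article><p>Latent Gap topics surface early.</p></article>"
    "</body></html>"
)
js_rendered = (
    "<html><head><title>Guide</title></head><body>"
    '<div id="app"></div>'
    '<script>document.getElementById("app").textContent = '
    '"Latent Gap topics surface early.";</script>'
    "</body></html>"
)

print(visible_without_js(server_rendered, "Latent Gap topics"))
print(visible_without_js(js_rendered, "Latent Gap topics"))
```

For the JS-rendered page, the only text left is the title, which matches the point above: the metadata arrives, the body does not. Against a live site, the same check would be run on the response body fetched without a browser.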

GA4 doesn’t show this. Search rankings don’t show this. The signal that something is wrong arrives after the damage is done — when brand recognition in AI-generated responses starts to lag competitors who built for both audiences.

Reading AI’s intent.

There’s a framing choice embedded in AHQG that I want to be explicit about.

I use the phrase “AI’s intent” deliberately. Not because AI has subjective experience, but because AI retrieval behavior has its own consistent logic — and treating it as intentional is the most useful model for designing against it. GA4 was built on the assumption that human behavior has patterns worth measuring. The same is true of AI retrieval behavior. EdgeShaping is an attempt to measure those patterns at the layer where they’re actually visible: the CDN.

AI-Human Query Gap. Both sides of that name matter equally. Drop the human axis, and you’re doing AIO without context. Drop the AI axis, and you’re doing SEO in a world that’s moved on.

Next: How to measure the AHQG — EdgeShaping, user-triggered retrieval logs, and the emergence of Query Phase Analytics as a leading indicator.