What EdgeShaping Taught Us

While reading the edge access logs for a site, I noticed an asymmetry.

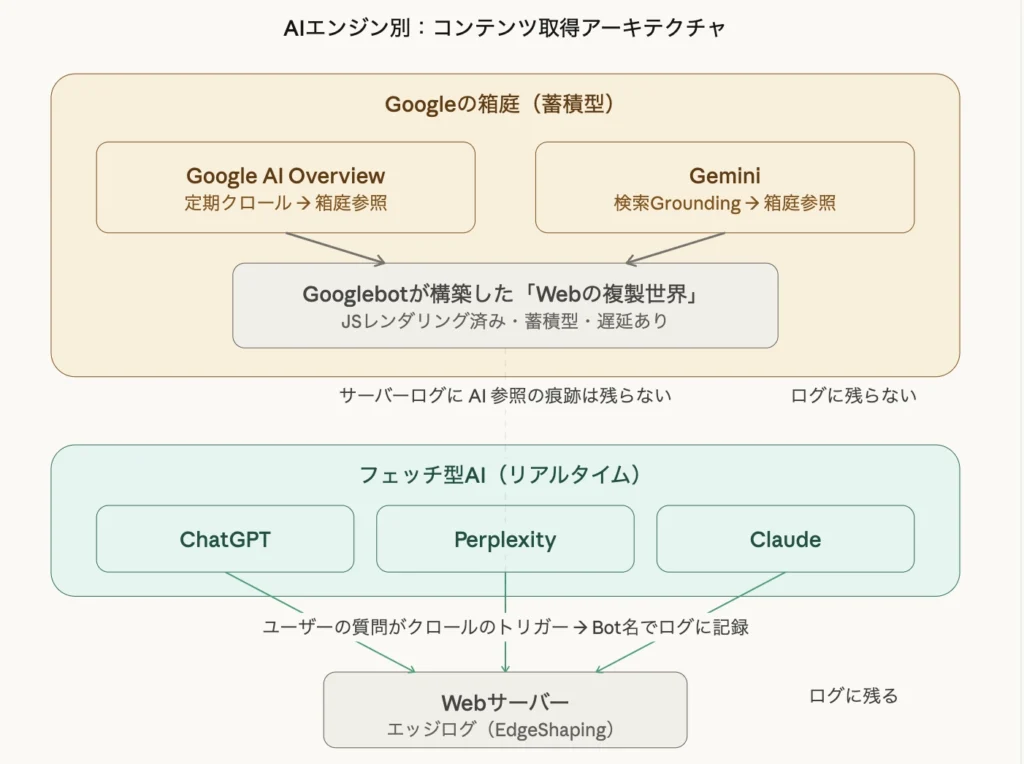

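GPTBot shows up. ClaudeBot shows up. PerplexityBot shows up. Only Google leaves nothing in the logs, even though AI Overview appears to be citing the site.

Googlebot, in its role as a search crawler, visits regularly. But at the moment AI Overview cited the site's information, the server received no request at all.

Why? The answer lies in a structural asset that Google owns.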

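Google's miniature garden: a "replica of the Web" as an asset

As a search engine, Google has used Googlebot to crawl the Web continuously for more than 20 years. It does more than visit pages. Its Web Rendering Service (WRS), built on headless Chrome, processes each page in full, including JavaScript execution and rendering, and stores the result in the index.

In other words, Google builds and maintains its own "replica of the Web," separate from the Internet itself. This replica world, the miniature garden, is the foundation of Google Search and also the information source for AI Overview.

Both AI Overview and Gemini generate answers from this garden. Gemini consults the index through its Search Grounding feature, but the essence is the same: when a user asks a question, the system does not go out to fetch the Web anew. It answers from what it has already accumulated.

That is why nothing arrives in the access logs. This is the structural reason behind the observation at the start.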

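Other AI engines use a different architecture

ChatGPT, Perplexity, and Claude run on an architecture that is fundamentally different from Google's.

These AIs are "fetch-type": each time a user asks a question, they go out and retrieve pages from the Web. The user's question is the crawl trigger. That is why User-Agents such as GPTBot, ClaudeBot, and PerplexityBot are recorded in server logs.

In addition, fetch-type AIs do not render JavaScript. For sites built with CSR (Client-Side Rendering), such as Figma CMS or React SPAs, the HTML source does not contain the content, so for these AIs the content is structurally invisible.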
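The CSR point above can be checked with a minimal sketch: given the raw HTML a non-rendering fetcher would receive, test whether a key phrase appears in the text outside `<script>` and `<style>`. The names `visible_text` and `readable_without_js`, and both sample pages, are hypothetical illustrations, not part of any real tool.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Collects text nodes, ignoring the bodies of <script> and <style>."""

    SKIPPED = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only text that a fetcher without a JS engine could read.
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def visible_text(raw_html: str) -> str:
    """Return the text present in the HTML source without executing JS."""
    parser = VisibleTextExtractor()
    parser.feed(raw_html)
    parser.close()
    return " ".join(parser.chunks)


def readable_without_js(raw_html: str, key_phrase: str) -> bool:
    """True if the phrase is in the source text itself (case-insensitive)."""
    return key_phrase.lower() in visible_text(raw_html).lower()


# Hypothetical pages: a CSR shell whose content exists only inside a script,
# and a server-rendered page that carries the same content in its markup.
CSR_SHELL = (
    '<html><body><div id="root"></div>'
    '<script>render("Pricing starts at $10")</script></body></html>'
)
SSR_PAGE = (
    "<html><body><main><h1>Pricing</h1>"
    "<p>Pricing starts at $10</p></main></body></html>"
)

if __name__ == "__main__":
    print(readable_without_js(CSR_SHELL, "Pricing starts at $10"))
    print(readable_without_js(SSR_PAGE, "Pricing starts at $10"))
```

In practice the input would be the response body from a plain HTTP GET of your own page. The sketch only approximates what a non-rendering fetcher sees and does not model any particular AI crawler.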

Three tiers emerge here.

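| Category | Crawl trigger | Server log | JS support | Freshness |
|---|---|---|---|---|
| Google (AI Overview) | Googlebot's scheduled crawl (unrelated to user questions) | Recorded as Googlebot (nothing is recorded when the AI cites the page) | Rendered | Pipeline-dependent, with delay |
| Gemini | Reads the garden via Search Grounding (a user question does not cause a new fetch) | Not recorded (indistinguishable from Googlebot's crawl) | Rendered (via the garden) | Pipeline-dependent, with delay |
| ChatGPT / Perplexity / Claude | The user's question | Recorded in real time under the bot's name | Not supported | Real time |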

Even though all of it is "information an AI uses to answer," the retrieval path and the visibility differ completely.

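The garden's fate: the freshness bottleneck

Google's garden has an overwhelming advantage in the depth of what it has accumulated. But the accumulation process itself becomes a freshness bottleneck.

The crawl, render, index pipeline always introduces a time lag. Content published today has to wait for the indexing cycle before it can be reflected in AI Overview.

Fetch-type AIs do not have this constraint. Content written just now may be read by an AI the same day, because the user's question triggers a direct fetch. One reason Perplexity can differentiate itself from Google is this immediacy, the ability to go and get "now."

That said, the lag is not necessarily on the scale of days or weeks. Since Caffeine in 2010, Google has operated a system that updates the index continuously, and for high-authority sites, content can be reflected within hours. The garden is slow, but the freshness handling Google has refined over many years should not be underestimated.

Even so, it remains a structurally different time scale from the immediacy of a fetch-type AI, which retrieves the page at the moment a user's question triggers it.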

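The view from the edge logs: EdgeShaping

Back to the observation at the start.

Fetch-type AIs leave traces in server logs. Which AI bot came, when, and for which page: all of this is recorded in the access logs at the edge (the CDN or the server).

Google's reads from its garden, by contrast, leave no log entry. Citations in AI Overview can only be observed indirectly through Search Console.

Taking this asymmetry as a premise, the idea is to visualize AI crawler behavior from edge access logs and understand which AIs can see your content and which cannot. This is the starting point of EdgeShaping and the core of its value proposition.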
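As a rough sketch of that visualization step, the following groups successful requests by path and by bot name. It assumes logs in the common "combined" format and matches on User-Agent substrings. The token list, the function names, and every sample log line (including the simplified User-Agent strings and documentation-range IPs) are made up for illustration and are not real traffic.

```python
import re
from collections import defaultdict

# Assumption: these substrings identify the bots named in the article.
# Real User-Agent strings vary; adjust the list to what your logs contain.
AI_BOT_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot", "Googlebot")

# Matches the request, status, size, referer, and User-Agent fields of a
# combined-format log line.
LOG_PATTERN = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def classify(user_agent):
    """Return the first known bot token found in the User-Agent, else None."""
    lowered = user_agent.lower()
    for token in AI_BOT_TOKENS:
        if token.lower() in lowered:
            return token
    return None


def visibility_matrix(lines):
    """Map each path to the sorted list of bots that fetched it with a 2xx."""
    seen = defaultdict(set)
    for line in lines:
        match = LOG_PATTERN.search(line)
        if not match or not match.group("status").startswith("2"):
            continue
        bot = classify(match.group("ua"))
        if bot:
            seen[match.group("path")].add(bot)
    return {path: sorted(bots) for path, bots in seen.items()}


# Fabricated sample lines, for illustration only.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/May/2025:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Example (compatible; GPTBot/1.0)"',
    '203.0.113.6 - - [10/May/2025:10:00:05 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Example (compatible; ClaudeBot/1.0)"',
    '203.0.113.7 - - [10/May/2025:10:01:00 +0000] "GET /blog/launch HTTP/1.1" 200 2048 "-" "Example (compatible; PerplexityBot/1.0)"',
    '198.51.100.2 - - [10/May/2025:10:02:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Example (compatible; Googlebot/2.1)"',
    '198.51.100.9 - - [10/May/2025:10:03:00 +0000] "GET /pricing HTTP/1.1" 200 5120 "-" "Mozilla/5.0 (X11; Linux x86_64)"',
    '203.0.113.5 - - [10/May/2025:10:04:00 +0000] "GET /old HTTP/1.1" 404 0 "-" "Example (compatible; GPTBot/1.0)"',
]

if __name__ == "__main__":
    for path, bots in visibility_matrix(SAMPLE_LOG).items():
        print(path, bots)
```

A Googlebot entry in this matrix reflects only the search crawl. Consistent with the asymmetry described above, an AI Overview citation would not add a row, so that side still has to be read from Search Console.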

People who can read logs are the first to notice this asymmetry. And from that observation, a visibility strategy for the AI era begins.

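Conclusion: SEO doubles as AIO, but it is not enough

Given the structure described so far, one affirmation and one limitation become visible.

Affirmation: SEO is effective for AIO. Google's garden is made up of content that has been properly indexed through SEO. Since both AI Overview and Gemini generate answers from this garden, content whose SEO is working can also appear in AI Overview. This is a fact.

Limitation: that holds only inside Google's garden. ChatGPT, Perplexity, and Claude are fetch-type and do not consult Google's index. They cannot render JavaScript either. SEO alone cannot secure visibility to these AI engines.

To achieve AI visibility across the board, the following are needed in addition to SEO.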

- Content delivery via SSR or static HTML (a form fetch-type AIs can read)

- Structured data that makes meaning explicit

- Observation of edge logs to understand visibility per AI
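The first two items can be illustrated together with a small sketch: a static HTML page whose content sits directly in the markup, with schema.org `Article` metadata embedded as JSON-LD. The function names, the choice of properties, and the sample values (author and date) are hypothetical placeholders, not something the article specifies.

```python
import json
from html import escape


def article_jsonld(headline, date_published, author_name):
    """Build a minimal schema.org Article description as a dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author_name},
    }


def render_static_page(headline, body_text, jsonld):
    """Return static HTML with the content in markup and JSON-LD in <head>."""
    # Escape "</" so the JSON payload cannot close the <script> element early.
    payload = json.dumps(jsonld, ensure_ascii=False).replace("</", "<\\/")
    return (
        "<!doctype html>\n"
        "<html><head>\n"
        f"<title>{escape(headline)}</title>\n"
        f'<script type="application/ld+json">{payload}</script>\n'
        "</head><body>\n"
        f"<h1>{escape(headline)}</h1>\n"
        f"<p>{escape(body_text)}</p>\n"
        "</body></html>\n"
    )


if __name__ == "__main__":
    # Placeholder values for illustration only.
    data = article_jsonld(
        "What EdgeShaping Taught Us", "2025-01-01", "Example Author"
    )
    print(render_static_page(data["headline"], "Body text goes here.", data))
```

Because the heading and body are in the HTML source itself, a fetcher that does not execute JavaScript can still read them. Whether a given AI engine makes use of the JSON-LD is not something this sketch can confirm.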

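SEO is a necessary condition for AIO, but not a sufficient one. The world of AI extends beyond Google's garden, and the edge logs are the starting point for seeing the whole picture.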